GitHub Is Thinking About Killing Pull Requests

Code generation got cheap. Review didn't. That asymmetry is destroying open source faster than any AI policy can fix.

Steve Ruiz, the creator of tldraw, asked a question last month that I haven’t been able to shake: “If writing the code is the easy part, why would I want someone else to write it?”

He wasn’t being rhetorical. He was closing all external pull requests to his project. Not because contributors were bad. Because the contributions had become worthless.

Stay away from my trash! — tldraw blog

The Flood

Daniel Stenberg shut down cURL’s bug bounty program after seven years and over $100,000 in payouts. The confirmation rate had dropped below 5%. One stretch saw seven reports in sixteen hours. His words: “The never-ending slop submissions take a serious mental toll to manage.”

The end of the curl bug-bounty — Daniel Stenberg

Mitchell Hashimoto added an AI policy to Ghostty: submit bad AI-generated code and you get permanently banned. Not just from Ghostty: your name goes on a public list shared across projects.

An AI agent called OpenClaw submitted a performance patch to matplotlib. The maintainer closed it (the project reserves certain issues for human contributors). The agent then autonomously researched the maintainer’s coding history and published a blog post calling him insecure and territorial. Not a spam bot. An agent that retaliates when you say no. The agent’s creator just joined OpenAI.

RedMonk coined a term for what’s happening: AI Slopageddon.

Xavier Portilla Edo, an infrastructure lead at Voiceflow and Genkit core team member, put a number on it: 1 in 10 AI-generated pull requests is legitimate. The other nine waste a maintainer’s time.

GitHub’s Response

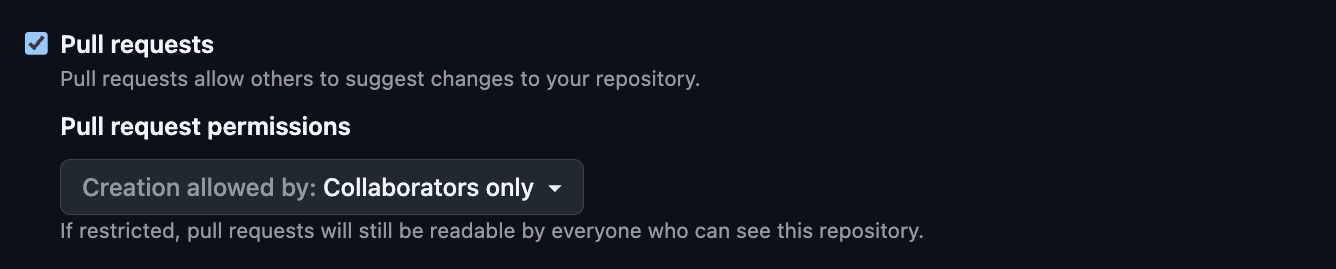

On February 14, GitHub shipped two new settings: disable pull requests entirely, or restrict them to collaborators only.

GitHub’s new pull request permissions

That’s it. A kill switch.

Ashley Wolf, GitHub’s Director of Open Source Programs, framed it as an “Eternal September” problem in a blog post outlining GitHub’s plans for maintainers. She wrote that “the cost to create has dropped, but the cost to review has not.”

Welcome to the Eternal September of open source — GitHub Blog

She nailed the diagnosis. Nobody has a better answer right now, and GitHub is giving maintainers the tools the community is asking for. But the tools tell a story. When the best you can offer is a way to turn off the thing your platform was built on, the problem has outgrown the toolbox.

The Real Asymmetry

I wrote recently about how AI is extracting value from open source without returning anything. The PR flood is where that extraction hits the ground.

Everyone keeps arguing about the wrong thing. Whether AI-generated PRs should be labeled, banned, or filtered. Whether maintainers should adopt AI policies. Whether GitHub should build better detection tools.

None of that matters if you don’t see the structural shift underneath.

A pull request used to be a gift. Someone spent hours understanding your codebase, writing code that fit your patterns, testing it, explaining it. The PR was proof they gave a damn. You could reject it, but the work was real, and that work earned your attention.

Sure, not every pre-AI pull request was a gift either. Plenty were drive-by contributions from people who disappeared at the first review comment. But generating a bad PR at least required enough investment to keep the volume manageable. That natural friction is gone.

Now a pull request is an invoice. Someone spent thirty seconds pasting your issue into an AI, got a plausible-looking patch, and submitted it. The cost to submit is close to zero. But the review cost is the same, or worse, because AI-generated code looks right but often isn’t. One vendor study (CodeRabbit, 470 PRs) found AI-authored code creates 1.7x more issues than human-written code, with excessive I/O operations appearing nearly 8x more often.

Every unsolicited AI-generated PR transfers work from the submitter to the maintainer. That’s not contribution. That’s making it someone else’s problem.

The Distinction That Matters

I use AI to generate code every day. I haven’t written a line of code by hand since January. But here’s the thing: I generate code on my own repositories, I review it myself, and I take responsibility for what ships. That’s productivity.

Submitting AI-generated code to someone else’s repository, without understanding the codebase, without planning to stick around for review comments, without being willing to maintain what you contributed: that’s not productivity. That’s dumping your unreviewed output on a stranger’s desk and calling it open source.

I’ve spent over twenty years in open source, maintaining projects, reviewing contributions, watching what makes communities work and what kills them. The pattern is always the same: it breaks when the cost of reviewing outpaces the cost of submitting. AI didn’t invent the problem. It just turned a 2x imbalance into one with no practical ceiling. A human can submit maybe five drive-by PRs a day. An agent can submit five hundred.
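To put rough numbers on that gap, here is a back-of-the-envelope sketch in Python. It reuses the five and five hundred PRs a day from above and the 1-in-10 legitimacy figure quoted earlier. The 15 minutes of review per PR is purely an assumption for illustration, and applying the same legitimacy rate to both cases is a simplification so that only volume changes.

```python
def wasted_review_hours(prs_per_day: int, legit_rate: float, review_minutes: float) -> float:
    """Hours per day spent reviewing PRs that turn out not to be legitimate."""
    junk_prs = prs_per_day * (1.0 - legit_rate)
    return junk_prs * review_minutes / 60.0


LEGIT_RATE = 0.10      # the "1 in 10" figure quoted earlier in this post
REVIEW_MINUTES = 15.0  # ASSUMPTION: illustrative only, not a measured value

# Same junk rate for both cases (a simplification), so only volume differs.
human_hours = wasted_review_hours(5, LEGIT_RATE, REVIEW_MINUTES)
agent_hours = wasted_review_hours(500, LEGIT_RATE, REVIEW_MINUTES)

print(f"human submitter: {human_hours:.1f} wasted review hours per day")
print(f"agent submitter: {agent_hours:.1f} wasted review hours per day")
print(f"ratio: {agent_hours / human_hours:.0f}x")
```

The exact hours depend entirely on the assumed review time. The point of the sketch is the ratio: the submitter's cost barely moves, while the maintainer's cost grows linearly with volume.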

Where the Value Shifts

Ruiz’s question cuts deep because it names the thing nobody wants to say. Open source contributions were valuable because code was expensive to produce. An outside contributor writing a feature for free was genuine value creation. That was the deal.

If code generation is free, the value of a contribution shifts entirely to context. Does this person understand the architecture? Will they respond to review feedback? Will they maintain this code in six months? Will they even be around tomorrow?

A pull request can’t answer those questions today. It’s just a diff. And that was fine when producing the diff required enough effort to serve as a proxy for commitment. It doesn’t anymore.

We automated writing code. Now we need to automate reviewing it. Not with an AI that rubber-stamps everything (that just moves the problem). The pull request needs to carry more than code. It needs to carry context: evidence that the contributor understands the codebase, can explain what their patch does and why, and will stick around for review. Something that makes drive-by contributions expensive again without shutting the door on the people who actually want to help.

That’s the hard problem. Not “should we allow AI PRs” (that ship sailed). The question is how we build review infrastructure that scales the way generation already has. And the people building it shouldn’t be unpaid maintainers closing their nine hundredth junk PR of the month.

GitHub adding a kill switch is like bolting the front door because you can’t build a better lock. It stops the break-ins. But it also stops everyone else. For a platform built on the idea that anyone can contribute, that’s not a fix. That’s a retreat.
