Your CI Pipeline Wasn't Built for This

AI writes code 10x faster than humans. CI still runs at the same speed, fails for the same flaky reasons, and costs more every month. Something has to give.

From where I sit at Mergify, the trend is obvious: same teams, same repos, no headcount changes, but more pull requests and more CI jobs than a year ago. The driver isn’t a mystery. AI started writing code.

And the number will keep climbing. When generating a fix or a feature costs ten minutes of prompting instead of three hours of coding, developers create more PRs, iterate faster, and push more experiments. Creating code got cheap. Testing it didn’t.

The Bill Nobody Budgeted For

More code means more tests. More tests means more CI minutes. More CI minutes means a bill that grows faster than the team.

This catches people off guard because the promise of AI-assisted development was productivity: do more with less. And that’s true on the code side. But CI doesn’t care who wrote the code. Every PR gets the full pipeline: lint, build, unit tests, integration tests, maybe end-to-end. Human PR or AI PR, same cost.

When you were shipping five PRs a day, running the full suite each time was fine. When you’re shipping thirty, you’re running the same tests six times as often, and most of those runs produce no new information. You’re paying for redundancy that made sense at human speed and makes no sense at AI speed.

The solution isn’t to skip tests. It’s to stop running tests that can’t tell you anything new. The usual advice (test selection, caching, fast checks before expensive suites) isn’t new. It’s just not how most pipelines work, because most teams configured them when five PRs a day felt busy.

Flaky Tests Are Poisoning Your Agents

Cost is painful but manageable. You can throw money at it. For a while. The real problem is signal.

On our main branch at Mergify, where the code is already merged and most runs are about integration stability, roughly 90% of CI failures are transient: infrastructure hiccups, network timeouts, resource limits, the kind of failures that disappear when you hit “retry.” That’s high, but our test suite is large and infrastructure-heavy. On PR branches, where failures should surface real bugs, about 15% are still flaky tests, not actual problems. Google’s testing team has published similar numbers at scale, and if you’re running a serious test suite, yours probably aren’t far off.

When a human developer sees a red build, they open the logs, recognize the flaky test, swear under their breath, and hit retry. They carry context. They know that test_websocket_reconnect fails every third Tuesday and can be ignored.

An LLM doesn’t know that.

Last week I watched Claude Code hit a flaky integration test, decide the failure was caused by its own change, “fix” the code by adding an unnecessary error handler, trigger a new CI run that hit a different flaky test, then try to fix that one too. Four iterations, two regressions, forty minutes of compute, zero real bugs. I killed the session and hit retry myself. Green on first try.

That’s the loop. At human pace, flaky tests are an annoyance. At AI pace, they’re a multiplier on wasted compute and wrong decisions. The LLM is making choices based on bad signal, and it’s making them at machine speed.

Yes, agents will get smarter about this. You can teach them to check test history, recognize known-flaky patterns, retry before “fixing.” But that pushes CI knowledge into every agent, every tool, every workflow. It’s the wrong layer. The CI system should know which signals to trust.

From Status to Signal

Today, CI is a gate: green means go, red means stop. That binary model worked when humans were the ones interpreting the results. It breaks when the consumer of CI output is an LLM that takes “red” at face value and starts debugging a ghost.

What CI actually needs is to become aware of its own reliability. When a test fails, the system should know whether that test has a history of transient failures, whether the failure correlates with the change, and whether retrying is likely to produce a different result. That context exists in build history and test failure patterns. Almost no pipeline uses it.

The next generation of CI needs to output signal, not just status. Not “failed” but “failed, likely flaky, recommend retry.” Not “13 tests failed” but “2 failures correlate with your change, 11 are known flaky.” Give the LLM (or the human) the information to make a good decision instead of a fast one.

This matters beyond individual PRs. Merge queues depend on CI signal to decide what lands in main. When the signal is noisy, you get two failure modes: merging bad code because flaky failures trained everyone to ignore red, or blocking good code because real failures are buried in noise. Both get worse as volume increases.

The Real Waste

Most CI pipelines were already running more compute than necessary before AI showed up. Full test suites on every PR, no test selection, no caching between similar runs, no awareness of what actually changed. The waste was tolerable at human speed because the volume was low.

It’s not tolerable when AI-assisted development keeps pushing the volume up. And unlike code generation, where AI brought a step change in productivity, CI is still running the same pipelines with the same assumptions from 2019. AI didn’t create the flaky test problem or the redundant pipeline problem. It just made both impossible to ignore.

Your CI pipeline was built for a world where code was expensive to write and cheap to test. That world is gone.

Related posts

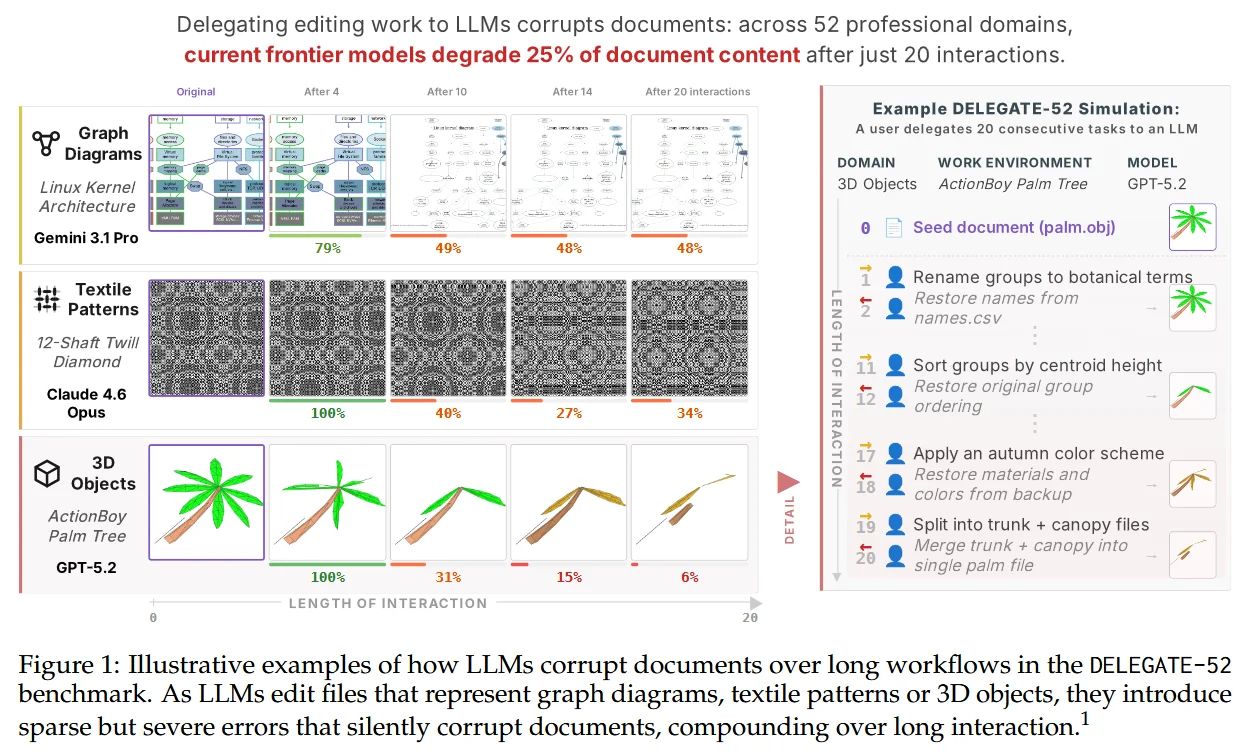

The Hidden Corruption Tax of AI Delegation

Frontier LLMs corrupt 25% of what you delegate. The fix isn't going back to writing by hand. It's the same linters and CI we built for humans, finally pointed at the new worker.

Read more →

How Much Of A Library Do You Actually Use?

Armin Ronacher's 'Build It Yourself' got reposted after the npm attacks. The advice is right. The hard part is the question nobody quite answers: which dependencies do you actually use?

Read more →