The Code Review Bottleneck Is You

The software pipeline has always had a bottleneck. For decades it was writing code. AI fixed that. Now it's the step right after.

Every software team runs the same pipeline: write code, test it, review it, merge it. For decades, the bottleneck was the first step. A developer could write maybe two PRs a day. Review kept up easily because there wasn’t much to review. Testing and merging were automated. The pipeline was balanced.

AI blew up the first step. A developer with AI can produce five or six PRs a day. But a reviewer can still only handle the same number they always could. The pipeline is no longer balanced.

Before AI, writing was the limiting factor and review kept up. After AI, writing produces far more than review can absorb.
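The imbalance can be sketched as a toy queue model. This is only an illustration: the function name and the assumption of constant daily rates are mine, and the review capacity of two PRs a day is a placeholder, not a measured figure.

```python
def review_backlog(written_per_day: float, reviewed_per_day: float, days: int) -> float:
    """Unreviewed PRs left after `days`, assuming constant rates and an empty queue at the start."""
    backlog = 0.0
    for _ in range(days):
        backlog += written_per_day
        # A reviewer cannot review more PRs than are waiting.
        backlog -= min(backlog, reviewed_per_day)
    return backlog


# Before AI: two PRs written a day, two reviewed a day (assumed capacity).
before = review_backlog(written_per_day=2, reviewed_per_day=2, days=20)

# After AI: five PRs written a day, same assumed review capacity.
after = review_backlog(written_per_day=5, reviewed_per_day=2, days=20)

print(before, after)
```

Under these assumptions the queue stays empty in the first case and grows by three PRs every working day in the second. Merged output is capped by the slower stage either way.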

What our merge queue shows

At Mergify, we build merge automation. Our core product repo is our canary. Here’s what the writing step looks like, measured in human-authored PRs per engineer per working day (excluding bots):

- 2023: 1.3 PRs/engineer/day
- 2024: 1.5 (+15%)
- 2025: 1.7 (+13%)
- 2026: 2.7 (+59%, on pace)

Output per engineer more than doubled in three years. The jump from 2025 to 2026 alone is almost 60%, which coincides with the leap in model capabilities over the same period. The team didn’t get twice as good at coding. They got 2026-era AI models.
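For anyone who wants to check the arithmetic, here is a small sketch that recomputes the year-over-year changes from the figures listed above. The variable and function names are illustrative only.

```python
# PRs per engineer per working day, as listed above (2026 is an on-pace figure).
prs_per_engineer_day = {2023: 1.3, 2024: 1.5, 2025: 1.7, 2026: 2.7}


def yoy_growth_percent(series: dict[int, float]) -> dict[int, int]:
    """Year-over-year growth, rounded to the nearest whole percent."""
    years = sorted(series)
    return {
        year: round((series[year] / series[prev] - 1) * 100)
        for prev, year in zip(years, years[1:])
    }


growth = yoy_growth_percent(prs_per_engineer_day)
overall_ratio = prs_per_engineer_day[2026] / prs_per_engineer_day[2023]

print(growth)
print(round(overall_ratio, 2))
```

The overall ratio from 2023 to 2026 comes out a little above 2, which is where "more than doubled" comes from.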

My own numbers make it even clearer. In 2023, I merged 120 PRs. In 2024 and 2025, around 330 each. In 2026, I’m on pace for ~650: double my previous rate, almost entirely AI-assisted. The writing step got cheap. But every one of those PRs still needs a human review, and that’s where the math breaks down.

The pipeline needs rebalancing

The instinct when the review queue grows is to review faster. Skim more, approve more, trust the green CI badge. That’s how you ship bugs.

The real answer is to rebalance the pipeline. When the writing step was slow, it made sense for every PR to get the same level of review. There wasn’t that much volume. Now that the writing step produces 2x the output, you need a review process that can triage.

Not every PR deserves the same scrutiny. A dependency bump that passes CI and dependency scanning and has a clean changelog? Auto-merge it. A pure refactor where the tests are green? Spot-check and ship. Save your deep review cycles for the PRs that change behavior, touch shared interfaces, or modify things that are hard to roll back.
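Those rules can be written down as a small routing function. This is a minimal sketch, not Mergify's implementation or configuration: the `PullRequest` fields, the `kind` labels, and the `Route` names are all hypothetical signals a team would have to define and compute for itself.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_MERGE = "auto-merge"
    SPOT_CHECK = "spot-check"
    DEEP_REVIEW = "deep-review"


@dataclass(frozen=True)
class PullRequest:
    # Every field here is an assumed, hypothetical signal.
    kind: str  # e.g. "dependency-bump", "refactor", "feature"
    ci_passed: bool
    dependency_scan_clean: bool = False
    changelog_clean: bool = False
    changes_behavior: bool = False
    touches_shared_interface: bool = False
    hard_to_roll_back: bool = False


def route(pr: PullRequest) -> Route:
    """Pick a review level; anything not clearly low-risk gets a deep review."""
    risky = pr.changes_behavior or pr.touches_shared_interface or pr.hard_to_roll_back
    if risky or not pr.ci_passed:
        return Route.DEEP_REVIEW
    if pr.kind == "dependency-bump" and pr.dependency_scan_clean and pr.changelog_clean:
        return Route.AUTO_MERGE
    if pr.kind == "refactor":
        return Route.SPOT_CHECK
    return Route.DEEP_REVIEW


bump = PullRequest("dependency-bump", ci_passed=True,
                   dependency_scan_clean=True, changelog_clean=True)
print(route(bump).value)
```

The default is deliberately conservative: the function only downgrades scrutiny when a PR matches a known low-risk pattern, so an unclassified change still lands in front of a human.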

The nature of review changes too. When AI writes the code, correctness (at least for syntax and basic logic) tends to be fine if the tests pass. The real question shifts to “is this the right approach?” You’re reviewing architecture decisions, not indentation. Reading less line-by-line, asking more questions about why.

There’s a deeper principle here. As apenwarr wrote, the job of a code reviewer isn’t to review code. It’s to figure out how to make their review comment obsolete. Think of go fmt: one tool, and an entire class of style comments vanished from code review forever. Good linters do the same for conventions. The best reviewers don’t just catch problems, they engineer the problems away so nobody has to catch them again.

And if you don’t trust your test suite enough to auto-merge trivial changes, you don’t have a review problem. You have a testing problem. The test suite is the first reviewer now. It needs to act like one.

AI review tools help. They’re not enough.

Tools like CodeRabbit, Greptile, and Qodo are solving real problems. They catch bugs, flag security issues, enforce conventions. I use them. They help.

But they solve the mechanical part of review: “does this code do what it says?” They don’t solve the judgment part: “should we be doing this at all?” That question still requires a human who understands the product, the architecture, and the tradeoffs. No AI reviewer is going to tell you that a perfectly correct refactor is a waste of time because the feature is getting killed next quarter.

The tools raise the floor. But the ceiling is still you. And on most teams, the ceiling is one or two senior engineers who are the only ones trusted to review certain areas of the codebase. AI doesn’t change that concentration. If anything, it makes it worse: more PRs, same two people signing off.

Where the bottleneck lands next

When I have seven Claude sessions running in parallel, each producing a PR with tests, the theoretical output is enormous. The practical output is whatever I can review and merge before context-switching kills me. As I wrote in The Flow Is Gone, that parallel setup degrades the depth of your own judgment. You’re producing more and reviewing worse at the same time.

Waydev reports that “more code, fewer releases” is the engineering leadership blind spot of 2026. Teams are writing more code than ever and shipping at the same pace or slower. The bottleneck moved, and most teams haven’t noticed.

The pull request was designed for a world where the writing step was slow. A developer opened one or two PRs a day, and a teammate reviewed them over coffee. That world is gone. I wrote about GitHub exploring the same question recently. The whole industry is circling the same realization: the tooling was built for the old bottleneck.

We’re rethinking this at Mergify. Risk-based review routing, automated triage by change type, smarter defaults for what needs human eyes and what doesn’t. I don’t have the full answer yet, but I know the current model, where every PR gets the same review ritual regardless of risk, is already broken. I’ll write more as we learn.

What’s actually hard

The pipeline always had a bottleneck. For 20 years, writing code masked everything else. It was slow enough that requirements, prioritization, architecture, and review all had time to keep up. Now that writing is fast, every other weakness is exposed.

Deming figured this out in manufacturing decades ago: adding inspection layers creates false security while hiding root causes. The Toyota Production System didn’t work because of more reviewers. It worked because of trust. The same principle applies to code review in 2026.

AI won’t kill junior engineers. But it will expose the seniors who stopped growing at the wrong layer. I used to be proud of how quickly I could ship. Now I’m proud of how many PRs I send back.

Related posts

How Much Of A Library Do You Actually Use?

Armin Ronacher's 'Build It Yourself' got reposted after the npm attacks. The advice is right. The hard part is the question nobody quite answers: which dependencies do you actually use?

Read more →

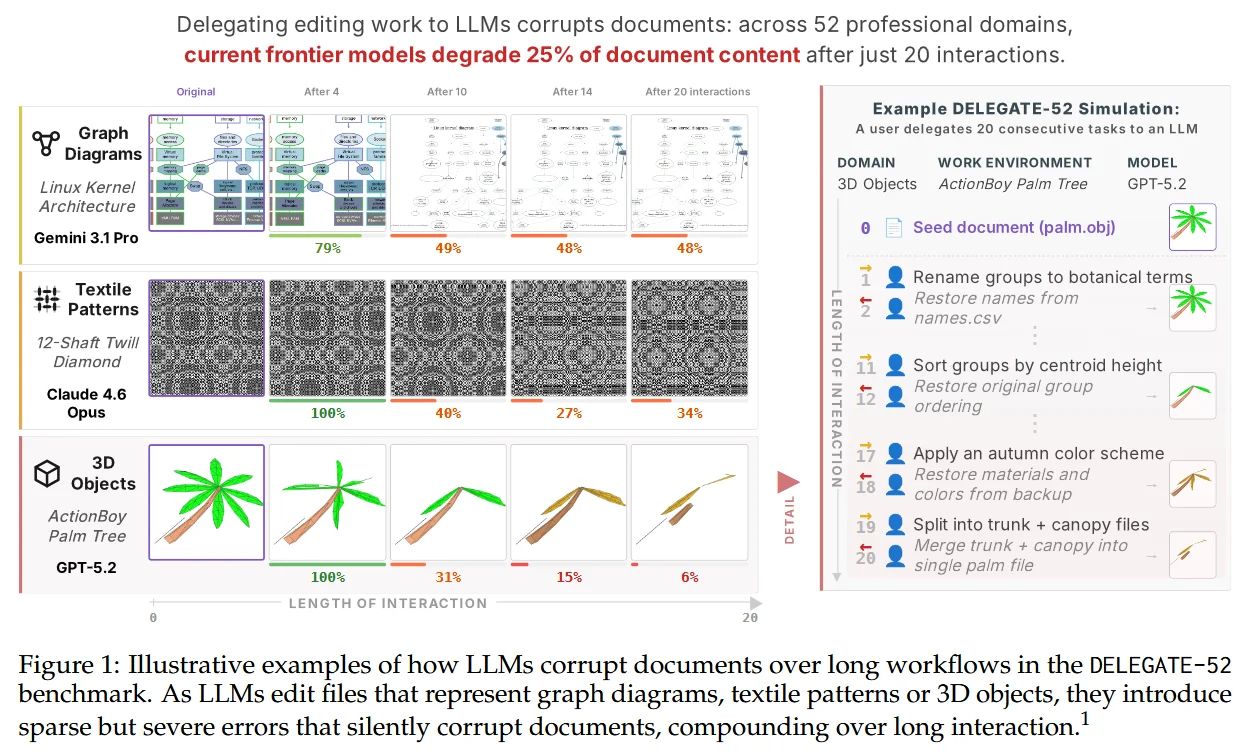

The Hidden Corruption Tax of AI Delegation

Frontier LLMs corrupt 25% of what you delegate. The fix isn't going back to writing by hand. It's the same linters and CI we built for humans, finally pointed at the new worker.

Read more →